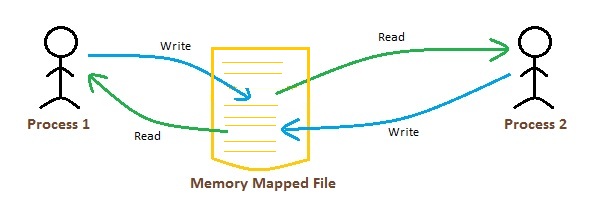

We see this commonly used with shared libraries often on many operating systems they are mapped into the address space of the program via a shared, read-only memory mapping that indicates the data should, in addition to being read-only, be executable. If we write to that address in memory we can update a potentially large file in another program instantly. We can have two processes map the same file and the operating system can potentially map pages of that file to the same locations in physical RAM thus saving overall IO bandwidth. These possibilities enable the two main features of memory mapping promised in the opening of this post, and further suggest a very efficient form of Inter-Process-Communication (IPC). When we map a file we get back a simple integer pointer not an OS stream abstraction.

The same physical page of memory can be mapped into several processes simultaneously.This can often be both simpler and faster than managing intermediate buffers of data in your own program. If we have 16GB of ram on a machine we can request the OS to memory map a file that is 100GB and it will happily do so, paging the data we read into physical memory as necessary. We can map a file much larger than physical ram.

Mapping RAM to filesystem storage has some interesting aspects that we can take advantage of to expand our possibilities when working with data: This enables both swapping (with which you are probably familiar), and memory mapping. This mapping system allows, among other things, the OS to load data from disk and place it in physical memory before your program is allowed to fully dereference the pointer. This implements what is called virtual memory - a mapping between memory that you see as a programmer and physical RAM in the system. When you dereference a pointer into that memory your program actually runs special low level code that checks the page table to see where the physical address of the requested memory lies. The OS keeps track of metadata about these pages in an associative data structure called a page table. These pages have fixed size such as 4K, 8K or 16K. When your program allocates memory the operating system allocates pages of memory. The main idea of Paging is to create a level of indirection between your program and physical RAM. There are specialized ways avoid this but we can safely ignore those for now as they do not apply to your average Java, Python, R, or C program. For the purpose of this post all access to RAM, regardless of if that access is via the JVM or a native pathway, happens via this mechanism. The operating system manages memory in this way whether that access is from a C malloc call or from a Java allocation of an array or an object. To begin, first we'll outline "paging", a slightly simplified model of how RAM is accessed in general. Memory mapping, available in all modern operating systems, allows programs to work with datasets larger than physical RAM while also allowing multiple programs running concurrently to believe they have access to the entire memory space (or more) of the machine. 
So, we will cover the Apache Arrow binary format, its overall architecture and base implementation and why it is such a great candidate for exactly this process. In this post, and in our work, we apply memory mapping to loading Apache Arrow 1.0 data. Ignoring it is a big mistake, akin to ignoring tremendous research that has gone into high performance data storage and access techniques. We have personally experienced a lot of success with it over the years. Memory mapping blurs the line between filesystem access and access to physical RAM in a way that benefits managing and processing large volumes of data on modern machines. This post is about a special facility for dealing with memory and files on disk called memory mapping. Memory Mapping, Clojure, And Apache Arrow Mmap, Memory, and Operating Systems
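As a rough illustration of the memory-mapping behaviour this post describes, here is a minimal Python sketch using the standard library `mmap` module. It is not the approach used in our own work; the function name `demo_shared_mapping`, the scratch file, and its contents are all made up for the example. It maps the same file twice, reads it by plain indexing rather than through a stream, and shows that a write through one mapping is visible through the other. Both mappings live in a single process here for simplicity; the assumption is that two separate processes mapping the same local file would see the same effect.

```python
import mmap
import os
import tempfile


def demo_shared_mapping():
    """Map one scratch file twice and show a write crossing between the maps."""
    # Hypothetical scratch file, created only for this demonstration.
    fd, path = tempfile.mkstemp()
    try:
        os.write(fd, b"hello memory mapping")
        os.close(fd)

        with open(path, "r+b") as f1, open(path, "r+b") as f2:
            # Length 0 means "map the whole file".
            # ACCESS_WRITE gives a shared, write-through mapping.
            writer = mmap.mmap(f1.fileno(), 0, access=mmap.ACCESS_WRITE)
            reader = mmap.mmap(f2.fileno(), 0, access=mmap.ACCESS_READ)
            try:
                # Plain slicing: no read() calls or intermediate buffers.
                before = bytes(reader[0:5])
                # Write through the first mapping...
                writer[0:5] = b"HELLO"
                # ...and observe the change through the second one.
                after = bytes(reader[0:5])
            finally:
                reader.close()
                writer.close()
        return before, after
    finally:
        os.remove(path)


if __name__ == "__main__":
    print(demo_shared_mapping())
    # mmap.PAGESIZE reports the page size the OS uses on this machine.
    print(mmap.PAGESIZE)
```

The file is reached through ordinary slicing, and the operating system decides which pages actually sit in physical RAM at any moment, so the same code shape should also apply to files far larger than memory.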
